
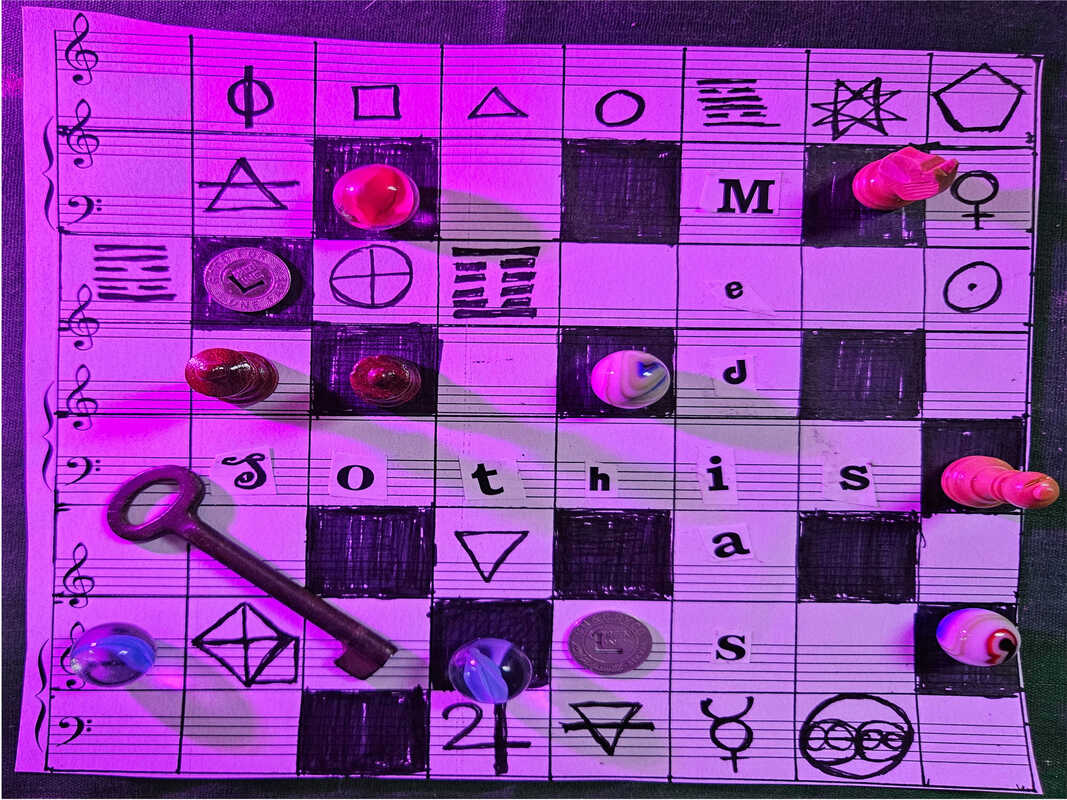

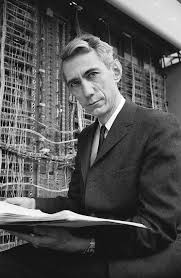

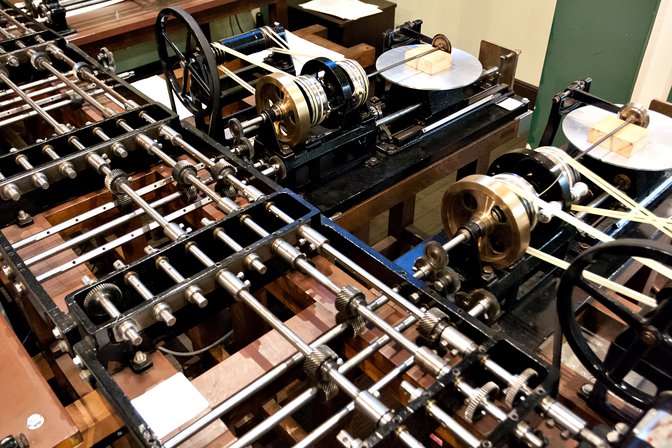

From the ice-cold farms and fields of Michigan to the halls of MIT and onward to Bell Labs at Murray Hill, Claude Shannon was a mathematical maverick and inveterate tinkerer. In the 1920s, in places where the phone company had not deigned to bring its network, around three million farmers built their own by connecting telegraph keys to the barbed-wire fences that stretched between properties. As a young boy Shannon rigged up one of these “farm networks” so that he and a friend who lived half a mile away could talk to each other at night in Morse code. He was also the kid people in town brought their radios to when they needed repair, and he got them working. He had the knack. He also had an aptitude for the more abstract side of math, and his mind could handle complex equations with ease. By the age of seventeen he was already in college at the University of Michigan and had published his first work in an academic journal, a solution to a problem posed in the pages of the American Mathematical Monthly. He double-majored, graduating with degrees in electrical engineering and mathematics, then headed off to MIT for his master’s. There he came under the wing of Vannevar Bush. Vannevar had followed in the footsteps of Lord Kelvin, who had created one of the world’s first analog computers, the harmonic analyzer, used to measure the ebb and flow of the tides. Vannevar’s differential analyzer was a huge electromechanical computer the size of a room. It solved differential equations by integration, using wheel-and-disc mechanisms to perform the integration. At school Shannon was also introduced to the work of the mathematician George Boole, whose 1854 book on algebraic logic, The Laws of Thought, laid down some of the essential foundations for the creation of computers. Boole had in turn taken up the system of logic developed by Gottfried Wilhelm Leibniz.
Might Boole also have been familiar with Leibniz’s book De Arte Combinatoria? In it Leibniz proposed an alphabet of human thought, and he was himself inspired by the Ars Magna of Ramon Lull. Lull had developed the Ars Magna, or “ultimate general art,” as a debating tool that helped speakers combine ideas through a compilation of lists; Leibniz wanted to bring it closer to mathematics and turn it into a kind of calculus. Shannon became the inheritor of these strands of thought through their development in the mathematics and formal logic that became Boolean algebra. Between working with Bush’s differential analyzer and studying Boolean algebra, Shannon was able to design switching circuits. This became the subject of his 1937 master’s thesis, A Symbolic Analysis of Relay and Switching Circuits. Shannon proved that his switching circuits could be used to simplify the complex and baroque systems of electromechanical relays used in AT&T’s routing switches. He then expanded the concept and showed that his circuits could solve any Boolean algebra problem, finishing the work with a series of circuit diagrams. In writing his thesis Shannon took George Boole’s algebraic insights and made them practical: electrical switches could now implement logic. It was a watershed moment that established the core concept behind all electronic digital computers. Digital circuit design was born. Next came his PhD. It took him three more years, and his subject matter showed the first signs of the multidisciplinary inclination that would later become a dominant feature of information theory. Vannevar Bush compelled him to go to Cold Spring Harbor Laboratory to work on a dissertation in genetics. Vannevar’s logic was that if Shannon’s algebra could work on electrical relays, it might also prove valuable in the study of Mendelian heredity.
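Returning briefly to the master’s thesis: its core observation is that switches wired in series behave like a logical AND (current flows only if every switch is closed) and switches wired in parallel behave like a logical OR (current flows if any branch is closed). Once a relay network is written as a Boolean expression, algebra can shrink it. The sketch below is a minimal illustration under stated assumptions: it uses the modern convention (closed contact = `True`), which differs from the notation in Shannon’s thesis, and the circuit itself is hypothetical, not one taken from the thesis. It checks by truth table that contact `x` in series with a parallel pair `(x, y)` can be replaced by `x` alone, the absorption law x·(x + y) = x.

```python
from itertools import product

def series(*contacts):
    # Series wiring: current flows only if every contact is closed (AND).
    return all(contacts)

def parallel(*contacts):
    # Parallel wiring: current flows if any branch is closed (OR).
    return any(contacts)

def original_circuit(x, y):
    # Hypothetical circuit: contact x in series with a parallel pair (x, y).
    return series(x, parallel(x, y))

def simplified_circuit(x, y):
    # Absorption law x·(x + y) = x: a single contact x does the same job.
    return x

# Compare both circuits for every combination of open/closed contacts.
same = all(
    original_circuit(x, y) == simplified_circuit(x, y)
    for x, y in product([False, True], repeat=2)
)
print(same)  # True
```

Checking every combination of switch positions is only practical for small circuits, which is exactly why an algebra that simplifies expressions symbolically was such a gain for relay designers.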
His research in genetics resulted in An Algebra for Theoretical Genetics, for which he received his PhD in 1940. The work proved too abstract to be useful, and during his time at Cold Spring Harbor he was often distracted. In a letter to his mentor Vannevar he wrote, “I’ve been working on three different ideas simultaneously, and strangely enough it seems a more productive method than sticking to one problem… Off and on I have been working on an analysis of some of the fundamental properties of general systems for the transmission of intelligence, including telephony, radio, television, telegraphy, etc…” With a doctorate under his belt Shannon went on to the Institute for Advanced Study in Princeton, New Jersey, where his mind was free to wander across disciplines and where he rubbed elbows with other great minds, including, on occasion, Albert Einstein and Kurt Gödel. He discussed science, math and engineering with Hermann Weyl and John von Neumann. All of these encounters fed his mind. It wasn’t long before Shannon moved elsewhere in New Jersey, to Bell Labs. There he crossed paths with other great minds such as Thornton Fry and Alan Turing. His prodigious talents were also being put to work for the war effort. It started with a study of noise. During WWII Shannon worked on the SIGSALY system, which was used to encrypt voice conversations between Franklin D. Roosevelt and Winston Churchill. It worked by sampling the voice signal fifty times a second, digitizing it, and then masking it with a random key that sounded like the circuit noise so familiar to electrical engineers. Shannon hadn’t designed the system, but he had been tasked with trying to break it, like a hacker, to find its weak spots and learn whether it was an impenetrable fortress that could withstand an enemy assault. Alan Turing was also working at Bell Labs on SIGSALY; the British had sent him over to make sure the system was secure.
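The masking step in SIGSALY can be illustrated with modular arithmetic: each digitized sample is shifted by a random key value, and the receiver, holding the same key, shifts it back. The sketch below is a simplified illustration, not a model of the real hardware. It assumes six quantization levels and key addition modulo six (an assumption here, chosen to match common descriptions of the system), skips the vocoder stage entirely, and uses hypothetical sample values. Python’s `secrets.randbelow` stands in for the system’s random key.

```python
import secrets

LEVELS = 6  # assumption: number of quantization levels per sample

def quantize(samples, levels=LEVELS):
    """Map samples in [0.0, 1.0] onto integer levels 0..levels-1."""
    return [min(int(s * levels), levels - 1) for s in samples]

def mask(values, key, levels=LEVELS):
    """Add one key value to each sample, wrapping around modulo levels."""
    return [(v + k) % levels for v, k in zip(values, key)]

def unmask(values, key, levels=LEVELS):
    """Subtract the same key values to recover the original levels."""
    return [(v - k) % levels for v, k in zip(values, key)]

# Hypothetical, already-sampled voice amplitudes (real SIGSALY took 50 per second).
samples = [0.05, 0.4, 0.93, 0.6, 0.2]
levels = quantize(samples)

# One fresh random key value per sample; both ends must share this key.
key = [secrets.randbelow(LEVELS) for _ in levels]

cipher = mask(levels, key)
print(cipher)                          # varies each run: looks like noise
print(unmask(cipher, key) == levels)   # True
```

Because every key value is equally likely, the masked levels are equally likely too, which is why an eavesdropper hears something like circuit noise; the scheme is only as strong as the secrecy and randomness of the key.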
If Churchill was going to speak over SIGSALY, it had to be uncrackable. During the war Turing got to know Shannon. The two weren’t allowed to talk about their top-secret projects, cryptography, or anything related to their efforts against the Axis powers, but they had plenty of other things to talk about, and they explored their shared passions: math and the idea that machines might one day be able to learn and think. Are all numbers computable? This was a question Turing asked in his famous 1936 paper On Computable Numbers, which he had shown to Shannon. In it Turing defined calculation as a mechanical procedure, or algorithm. The paper got the pistons in Shannon’s mind firing. Turing had written, “It is always possible to use sequences of symbols in the place of single symbols.” Shannon was already thinking about the way information gets transmitted from one place to the next. Turing had used statistical analysis as part of his arsenal when breaking the Enigma ciphers, and information theory in turn ended up being based on statistics and probability theory. The meeting of these two preeminent minds was just one catalyst for the creation of the large field and sandbox of information theory. Important legwork had already been done by other investigators who had made brief excursions into the territory Shannon later mapped out. Telecommunications in general already contained many ideas that would later become part of the theory’s core. Starting with telegraphy and Morse code in the 1830s, common letters were given the shortest codes: E is a single dot. Letters used less often get longer codes, such as B, a dash and three dots. The whole idea of lossless data compression is embedded as a seed pattern within this system of encoding information. In 1924 Harry Nyquist published the exciting Certain Factors Affecting Telegraph Speed in the Bell System Technical Journal.
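The Morse code observation, that frequent letters get short codes, can be made concrete by counting. The sketch below uses the International Morse alphabet (which postdates the 1830s American code but agrees on E and B) and compares the dots and dashes needed for a sample phrase against a hypothetical fixed-length code giving every letter five binary symbols, the minimum that covers 26 letters. It is a rough illustration under stated assumptions: it ignores the silent gaps real Morse needs between letters and words, so it is not a precise measure of transmission time.

```python
import math

# International Morse code for the 26 letters (dots and dashes only).
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}

def morse_marks(text):
    """Return (dots and dashes needed, number of letters) for text.

    Spaces and punctuation are skipped, and the gaps between letters
    and words are not counted.
    """
    letters = [c for c in text.upper() if c in MORSE]
    return sum(len(MORSE[c]) for c in letters), len(letters)

def fixed_length_marks(n_letters, alphabet_size=26):
    """Symbols needed if every letter used the same number of binary digits."""
    bits_per_letter = math.ceil(math.log2(alphabet_size))  # 5 for 26 letters
    return n_letters * bits_per_letter

marks, n_letters = morse_marks("THE RAIN IN SPAIN")
print(marks, fixed_length_marks(n_letters))  # 32 70
```

The saving comes entirely from matching code length to letter frequency, the same principle later formalized in lossless compression.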
Nyquist’s research focused on increasing the speed of a telegraph circuit. One of the first things an engineer runs into when working on this problem is how to transmit the maximum amount of intelligence over a given range of frequencies without causing interference in that circuit or in others it might be connected to. In other words, how do you increase the speed and amount of intelligence without adding distortion or noise, or creating spurious signals? In 1928 Ralph Hartley, also at Bell Labs, wrote his paper Transmission of Information. He made it explicit that information was a measurable quantity. For Hartley, information reflected only the receiver’s ability to tell that one sequence of symbols had been intended by the sender rather than any other: that the letter A means A and not E. Jump forward another decade to the invention of the vocoder. It was designed to use less bandwidth, compressing the speaker’s voice into less space. The same idea is now used in cellphone codecs, compressing the voice so that more conversations fit into the phone companies’ allocated frequencies. WWII had a way of producing scientific side effects, discoveries that would break through to affect civilian life after the war. While Shannon worked on SIGSALY and other cryptographic projects, he continued to tinker with other ideas. One of them was a theory of communication, and it had profound side effects. Twenty years after Hartley addressed the way information is transmitted, Shannon stated the problem this way: "The fundamental problem of communication is that of reproducing at one point, either exactly or approximately, a message selected at another point." In addition to the idea of clear communication across a channel, information theory brought the following ideas into play:

- The Bit, or binary digit. One bit is the information entropy of a binary random variable that is 0 or 1 with equal probability, or the information gained when the value of such a variable becomes known.
- The Shannon Limit: a formula for channel capacity. This is the speed limit for a given communication channel.
- Below that limit, there always exist error-correction techniques that can overcome the noise on a given channel. A transmitter may have to send extra, redundant bits, lowering the rate at which the message itself travels, but the message will get there.

His theory was a strange attractor in a chaotic system of noisy information. Noise itself tends to bring diverse disciplinary approaches together, interfering in their constitution and their dynamics. Information theory, in transmitting its own intelligence, has in its own way interfered with other circuits of knowledge it has come into contact with. A few years later the psychologist and computer scientist J. C. R. Licklider said, “It is probably dangerous to use this theory of information in fields for which it was not designed, but I think the danger will not keep people from using it.” Information theory reaches into every other field it can get its hands on. It’s like a black hole: everything in its gravitational path gets sucked in. Formed at the spoked crossroads of cryptography, mathematics, statistics, computer science, thermal physics, neurobiology, information engineering, and electrical engineering, it has been applied to even more fields of study and practice: statistical inference, natural language processing, the evolution and function of molecular codes (bioinformatics), model selection in statistics, quantum computing, linguistics, and plagiarism detection. It is the source code behind pattern recognition and anomaly detection, two human skills in great demand in the 21st century.
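Hartley’s measure, the bit, and the Shannon limit described above can each be computed in a line or two. The sketch below assumes the standard textbook formulas: Hartley’s H = n log s (taken here in base 2, so the result is in bits), the binary entropy H(p) = −p log₂ p − (1 − p) log₂(1 − p), and the Shannon-Hartley capacity C = B log₂(1 + S/N), which gives the Shannon limit for the specific case of a band-limited channel with Gaussian noise. The function names and the 3 kHz, 30 dB example line are hypothetical, chosen for illustration.

```python
import math

def hartley_information(n_symbols, alphabet_size):
    """Hartley's measure: n choices from s equally likely symbols, in bits."""
    return n_symbols * math.log2(alphabet_size)

def binary_entropy(p):
    """Entropy in bits of a variable that is 1 with probability p, else 0."""
    if p == 0 or p == 1:
        return 0.0  # a certain outcome carries no information
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def shannon_capacity(bandwidth_hz, snr_linear):
    """Shannon-Hartley capacity in bits per second: B * log2(1 + S/N)."""
    return bandwidth_hz * math.log2(1 + snr_linear)

print(binary_entropy(0.5))           # 1.0: a fair coin flip is exactly one bit
print(binary_entropy(0.9))           # about 0.469: a biased coin tells you less
print(hartley_information(10, 2))    # 10.0: ten binary digits carry ten bits

# Hypothetical telephone-like line: 3 kHz bandwidth, 30 dB SNR (1000x power).
print(shannon_capacity(3000, 1000))  # just under 30,000 bits per second
```

The fair coin is where the two ideas meet: with two equally likely symbols, Hartley’s measure and Shannon’s entropy both give exactly one bit per symbol, while the capacity formula shows why adding bandwidth or reducing noise raises the channel’s speed limit.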
I wonder if Shannon knew, when he wrote ‘A Mathematical Theory of Communication’ for the Bell System Technical Journal in 1948, that his theory would go on to unify, fragment, and spin off into multiple disciplines and fields of human endeavor, music being just one among a plethora. Yet music is a form of information. It is always in formation. And information can be sonified and used to make music. Raw data becomes audio dada. Music is communication, and one way of listening to it is as a transmission of information. The principles Shannon elucidated are a form of noise in the systems of world knowledge, and they highlight one way of connecting different fields of study. As information theory exploded, it was quickly picked up as a tool by the more adventurous composers. Information theory could be at the heart of making Hermann Hesse’s fictional Glass Bead Game a reality. Hesse also dropped several hints and clues in his work that connected it with the same thinkers whose work served as a link to Boolean algebra, namely Athanasius Kircher, Lull and Leibniz, who were all practitioners and advocates of the mnemonic and combinatorial arts. Like its predecessors, information theory is well suited to connecting the spaces between different fields. In Hesse’s masterpiece the game was created by a musician as a way of “represent[ing] with beads musical quotations or invented themes, could alter, transpose, and develop them, change them and set them in counterpoint to one another.” After some time the game was taken up by mathematicians: “…the Game was so far developed it was capable of expressing mathematical processes by special symbols and abbreviations.
The players, mutually elaborating these processes, threw these abstract formulas at one another, displaying the sequences and possibilities of their science.” Hesse goes on to explain, “At various times the Game was taken up and imitated by nearly all the scientific and scholarly disciplines, that is, adapted to the special fields. There is documented evidence for its application to the fields of classical philology and logic. The analytical study had led to the reduction of musical events to physical and mathematical formulas. Soon after philology borrowed this method and began to measure linguistic configurations as physics measured processes in nature. The visual arts soon followed suit, architecture having already led the way in establishing the links between visual art and mathematics. Thereafter more and more new relations, analogies, and correspondences were discovered among the abstract formulas obtained this way.” In the next sections I will explore the way information theory was used and applied in the music of Karlheinz Stockhausen. Read the rest of the Radiophonic Laboratory series.

REFERENCES:

- A Mind at Play: How Claude Shannon Invented the Information Age, by Jimmy Soni and Rob Goodman. Simon & Schuster, 2018.
- The Information: A History, a Theory, a Flood, by James Gleick. Pantheon, 2011.
- The Glass Bead Game, by Hermann Hesse, translated by Clara and Richard Winston. Holt, Rinehart and Winston, 1990.
- Information Theory and Music, by Joel Cohen. Behavioral Science, 7:2 (April 1962).
- Information Theory and the Digital Age, by Aftab, Cheung, Kim, Thakkar, and Yeddanapudi.
- Logic and the Art of Memory: The Quest for a Universal Language, by Paolo Rossi. The Athlone Press, University of Chicago, 2000.

2 Comments

Lathechuck

10/8/2020 04:00:57 am

If you ever get the chance to see the film biography of Shannon: "Bit Player", don't miss it. It's haunting.


10/8/2020 06:43:17 am

Hi Chuck. Thanks for stopping by and checking out the article. I'm going to see if the library has "Bit Player" or otherwise find a way to watch it. (Great title for a movie about him.) I appreciate the recommendation. Hope you are well!



Justin Patrick Moore, author of The Radio Phonics Laboratory: Telecommunications, Speech Synthesis, and the Birth of Electronic Music.
